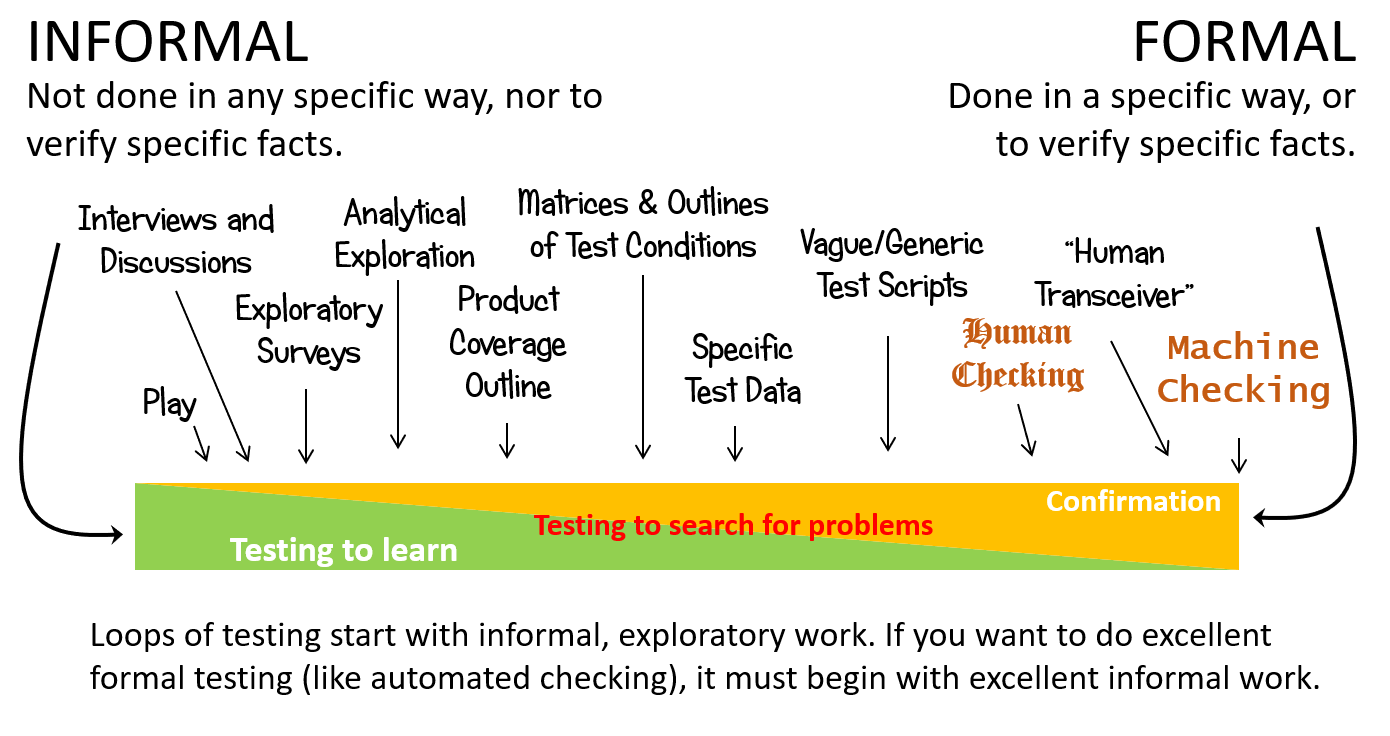

Formal testing is testing that must be done in a specific way, or to check specific facts. In the Rapid Software Testing methodology, we map the formality of testing on a continuum. Sometimes it’s important to do testing in a formal way, and sometimes it’s not so important.

From Rapid Software Testing. See http://www.satisfice.com/rst.pdf

People sometimes tell me that they must test their software using a particular formal approach—for example, they say that they must create heavily documented and highly prescriptive test cases. When I ask them why, they claim it’s because of “standards” or “regulations”. For example, Americans often refer to Public Law 107-204, also known as the Sarbanes-Oxley Act of 2002, or SOX. I ask if they’ve read the Act. “Well… no.”

If you’re citing Sarbanes-Oxley compliance as a reason for producing test scripts, I worry that someone is beating you—or you’re beating yourself, perhaps—with a rumour. Sarbanes-Oxley says nothing about software testing. In fact, it says nothing about software.

It does not say that you have to do software testing in any particular way. It certainly does not talk about test cases or test scripts. It does talk about testing, but only of “the auditor’s testing of the internal control structure and procedures of the issuer (of reports required by the SEC —MB)”. In this context, the word “testing” could easily be replaced by “scrutiny” or “evaluation” or “assessment”.

Don’t believe me? Read it: https://www.sec.gov/about/laws/soa2002.pdf. Search for the words “test cases”, or “test scripts”, or even “test”. What do you find?

All testing comes with several kinds of cost, including development cost, maintenance cost, execution cost, transfer cost, and—perhaps most importantly—opportunity cost. (Opportunity cost means, basically, that we won’t be able to do that potentially valuable thing because we’re doing this potentially valuable thing.)

Formality may provide a certain kind of value, but we must remain aware of its cost. Every moment that we spend on representing our test ideas as documentation, or on preparing to test in a specific way, or on encoding a testing activity, or on writing about something that we’ve done is a moment in which we’re not interacting directly with the product.

More formality can be valuable if it’s helping us learn, or if it’s helping us structure our work, or if it helps us to tell the testing story—all such that the value of the activity meets its cost. The key question to ask, it seems to me, is “is it helping?” If it’s not helping, is it necessary?—and what is the basis of the claim that it’s necessary? Asking such questions helps to underscore that many things we “have to” do are not obligatory, but choices.

Formal testing is often said to involve comparing the product to the requirements for it, based on the unspoken assumption that “requirements” means “documented requirements”. Certainly some requirements can be understood and documented before we begin testing the product. Other requirements are tacit, rather than explicit. (As a trivial example, I’ve never seen a requirements document that notes that the product must be installed on a working computer.)

Still other requirements are not well-understood before development begins; the actual requirements are discovered, revealed, and refined as we develop the product and our understanding of the problems that the product is intended to solve.

As my colleague James Bach has said, “Requirements are ideas at the intersection between what we want, what we can have and how we might decide that we got it. In complex systems that requires testing. Testing is how we learn difficult things.” That learning happens in tangled loops of development, experimentation, investigation, and decision-making. Our ideas may or may not be documented formally—or at all—at any specific point in time. Indeed, some things are so important that we never document them; we have to know them.

So it goes with testing. Some aspects of our testing will be clear from the outset of the project; other aspects of our testing will be discovered, invented, and refined as we develop and test the product. Excellent formal testing, as James says, must therefore be rooted in excellent informal testing.

And who agrees with that? The Food and Drug Administration, for one, in the Agency’s “Design Considerations for Pivotal Clinical Investigations for Medical Devices”.

Few (in my experience, very few) of those people who insist on highly formal testing to comply with standards or regulations—or even with requirements documents—have read the relevant documents or examined them closely. In such cases, the justification for the formality is based on rumours and on going through the motions; the formality is premature, or excessively expensive, or unnecessary, or a distraction; and (in)consistency with the documentation and (non-)compliance with the regulations become accidental. At its worst, the testing falls into what James calls “pathetic compliance”, following “the rules” so we don’t “get in trouble”—a pre-school level of motivated behaviour.

As responsible testers, it is our obligation to understand what we’re testing, why we’re testing it, and why we’re testing it in a particular way. If we’re responsible for testing to comply with a particular standard, we must learn that standard, and that means studying it, understanding it, and relating it to our product and to our testing work. That may sound like a lot of work, and often it is. Yet our choice is to do that work, or to run the risk of letting our testing be structured and dictated to us by a sock puppet. Or a SOX puppet.

Photo credit: iStockPhoto

Further reading: Breaking the Test Case Addiction (and subsequent posts)

Hi Michael, I couldn’t agree more on this one. I set up a SOX compliance program at a major Australian bank almost 10 years ago and spent several months getting to understand the guidance. It meant a serious amount of work with auditors, accountants and lawyers to be able to implement a workable and non-invasive framework.

Unlike many Testers I’ve met I actually enjoy working on compliance programs. When you get them right the sense of achievement is usually well in excess of a standard strategic business endeavour!!

Great article.

[…] The Sock Puppets of Formal Testing Written by: Michael Bolton […]

[…] Blog: The Sock Puppets of Formal Testing – Michael Bolton – http://www.developsense.com/blog/2014/07/sock-puppets-of-formal-testing/ […]


It would be nice if there were a formal, or somewhat formal, definition of what each term on the testing formality continuum means. There isn’t a definition in rst.pdf.

Michael replies: Good idea. Not done yet, but it’s on the list. Thanks!